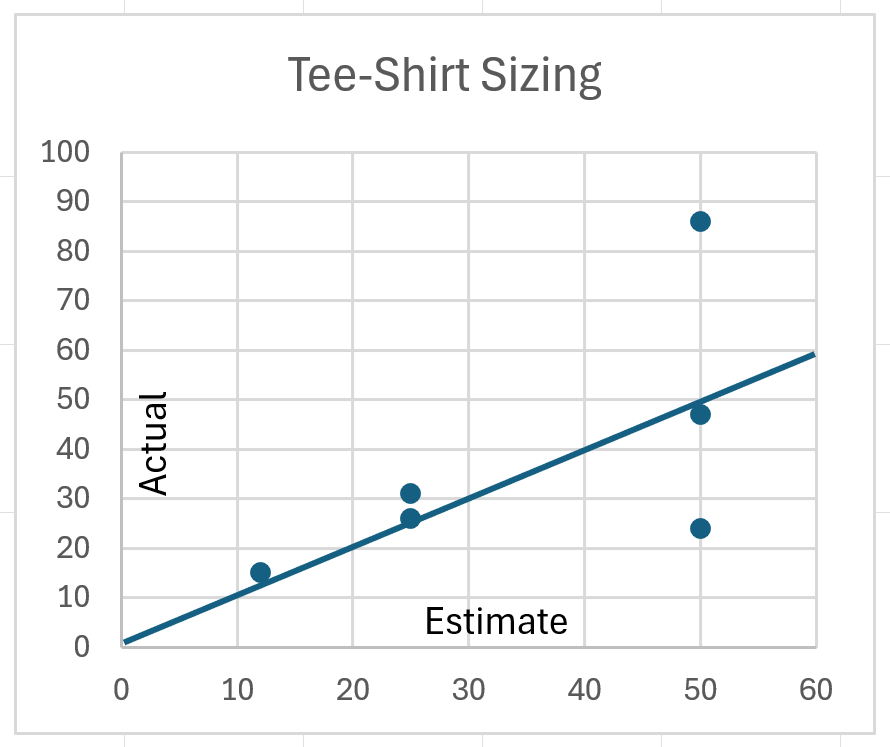

With six cases now in the log, I’d say the bot estimates about as well as humans—and codes with greater knowledge.

And we’ve pair-learned together, which is an achievement in itself.

1. Thrashing

Several prompts in a row without progress: it happens to humans too. I asked the bot to suggest a reset whenever this occurred. In one large task it happened twice, and I asked why it hadn’t noticed.

It replied with a revelation: every prompt felt normal to it, and it didn’t get frustrated the way humans do. Vivid insight!

In both cases I documented the issue carefully and conducted a design review, which led to resolution. We agreed that three prompts without progress would trigger a reset.
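The three-prompt rule is simple enough to sketch in code. This is only an illustration, not something the bot and I actually ran: the names `ThrashTracker`, `record`, and `RESET_THRESHOLD` are made up for the example, and deciding whether a prompt "made progress" is still left to the human.

```python
from dataclasses import dataclass

# Assumption: the threshold is the "three prompts without progress" we agreed on.
RESET_THRESHOLD = 3


@dataclass
class ThrashTracker:
    """Counts consecutive prompts that made no progress (hypothetical helper)."""

    threshold: int = RESET_THRESHOLD
    stalled: int = 0

    def record(self, made_progress: bool) -> bool:
        """Log one prompt; return True when a reset should be suggested."""
        if made_progress:
            # Any progress clears the streak.
            self.stalled = 0
            return False
        self.stalled += 1
        return self.stalled >= self.threshold

    def reset(self) -> None:
        """Start counting again after a reset or design review."""
        self.stalled = 0
```

In use, I would call `record(False)` after each prompt that went nowhere, and stop for a reset the first time it returns `True`.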

2. Logic design

Humans are both blessed and cursed by their ability to believe bad or incomplete logic. Blessed—because they will attempt the seemingly impossible. Cursed—because they must change their belief to reach their goals.

At one point I saw that the code was working but the result was wrong: bad logic. I didn’t ask the bot for help. You don’t really understand something until you can explain it to someone else.

If I could explain the needed change to the bot, I could write the logic myself.

For the bot to recognize that the problem statement itself needed changing would be super-intelligence. If it can’t recognize thrashing, we’re not there yet.

It took a couple of resets for me to get the logic right—sometimes it’s better to pivot than to persevere.

3. Serendipity

I’ve started writing pseudocode in VS Code, using a file extension to get IntelliSense and syntax help. I noticed that my bot’s friend, Copilot, began suggesting the next statements automatically based on context. Very helpful.

Sometimes the suggestions were wrong, but I kept using them. Generally, I work on a fragment of pseudocode, then paste it into live code to finish it.

Next step: automated testing—to complete the cycle.